Data Migration Guide

This guide covers how existing cryptographic finding data is migrated from v3 to v3.5 when upgrading the AgileSec Analytics platform. It explains the available migration options, how the migration tool works, and what happens to data produced by older sensors that have not been upgraded yet.

Data Flow

The data path differs depending on the sensor version. Understanding these paths is important when planning your migration strategy, as each sensor version writes data differently and to different indexes.

v3.5 Sensors

Sensor data is received by the Ingestion Services and published to the new v3.5 Kafka topics. The Indexing Service consumes from the v3.5 topics, validates the schema, applies any enrichments, and writes the data to the v3.5 OpenSearch indexes.

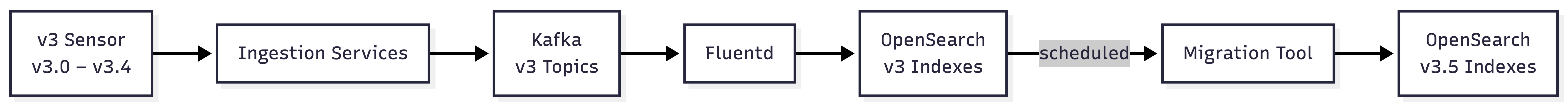

v3 Sensors (v3.0 - v3.4)

Sensor data is received by the Ingestion Services and published to the v3 Kafka topics. Fluentd consumes from the v3 Kafka topics, performs transformations and enrichments, and writes the data to the old v3 OpenSearch indexes. The migration tool runs on a schedule and continuously converts v3 sensor data into the v3.5 format.

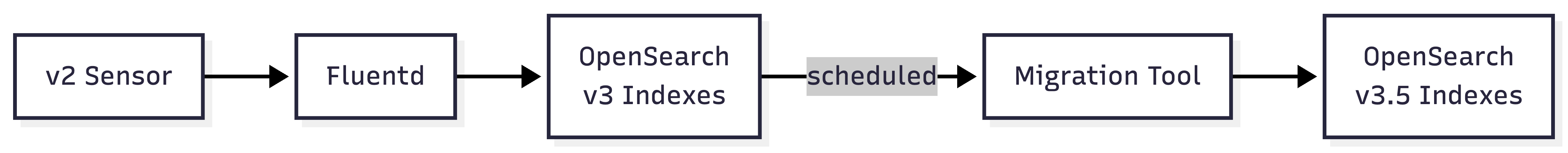

v2 Sensors (legacy)

Sensor data is sent directly to Fluentd, which writes it to the old v3 OpenSearch indexes. The migration tool runs on a schedule and converts this data into the v3.5 format.

⚠️ Security Notice: Enabling v2 sensor support exposes Fluentd without authentication. This means any client that can reach Fluentd can write data to the old indexes. It is recommended to restrict network access to Fluentd and migrate v2 sensors to v3.5 as soon as possible to eliminate this exposure.
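
The three paths above can be summarized as a lookup keyed by sensor family. The following is a minimal sketch for planning purposes only: the hop names come from this guide, while the dictionary layout and the helper names (sensor_family, passes_through_migration_tool) are hypothetical and not part of the product.

```python
# Hop names are taken from this guide; the data structure and helper names
# are hypothetical and only illustrate which path each sensor family uses.
DATA_PATHS = {
    "v3.5": [
        "Ingestion Services",
        "v3.5 Kafka topics",
        "Indexing Service",
        "v3.5 OpenSearch indexes",
    ],
    "v3": [
        "Ingestion Services",
        "v3 Kafka topics",
        "Fluentd",
        "old v3 OpenSearch indexes",
        "migration tool",
        "v3.5 OpenSearch indexes",
    ],
    "v2": [
        "Fluentd",
        "old v3 OpenSearch indexes",
        "migration tool",
        "v3.5 OpenSearch indexes",
    ],
}


def sensor_family(version: str) -> str:
    """Map a sensor version such as '3.2' to one of the three families."""
    parts = version.split(".")
    major = int(parts[0])
    minor = int(parts[1]) if len(parts) > 1 else 0
    if major == 2:
        return "v2"
    if major == 3 and minor <= 4:
        return "v3"
    if major == 3 and minor == 5:
        return "v3.5"
    raise ValueError(f"unsupported sensor version: {version}")


def passes_through_migration_tool(version: str) -> bool:
    """True when data from this sensor reaches v3.5 only via the migration tool."""
    return "migration tool" in DATA_PATHS[sensor_family(version)]
```

Under these assumptions, any sensor for which passes_through_migration_tool returns True depends on the scheduled migration to appear in the v3.5 indexes.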

Migration Options

AgileSec Analytics includes a migration tool to read data from the old v3 indexes, transform each document to the v3.5 schema, and insert it into the corresponding v3.5 index.

Two migration options are available, depending on whether backwards compatibility for existing integrations is required. With either option, pre-3.5 sensors can continue sending data to the old index; the migration tool will automatically convert incoming data into the v3.5 index on a schedule.

ℹ️ MIGRATION_KEEP_SOURCE controls which option is used. It defaults to true: old data is retained after migration unless the variable is explicitly set to false.

Option 1 – Migrate and Keep

The original v3 documents are retained in the old index after migration and marked with migrated: true. This option maintains backwards compatibility with existing integrations reading from the old v3 index.

Choose this option if:

You are using the Search API to read data from the old v3 index and cannot update your code immediately – this option keeps the old index available until your integration has been migrated to use the v3.5 format

A gradual rollout is preferred, where old and new indexes coexist

To enable, set the following in config_envs/scheduler:

MIGRATION_KEEP_SOURCE=true

Option 2 – Migrate and Delete

The original v3 documents are deleted from the old index after migration. This keeps the environment clean and reduces storage overhead.
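
The difference between the two options can be sketched on an in-memory stand-in for the old index. This is an illustration under stated assumptions, not the tool's implementation: the function names are hypothetical, the old index is modeled as a plain dictionary, and the case-insensitive parsing of MIGRATION_KEEP_SOURCE is an assumption. Only the default of true and the migrated: true marker come from this guide.

```python
import copy


def keep_source_from_env(env: dict) -> bool:
    """Return True unless MIGRATION_KEEP_SOURCE is explicitly 'false'.

    The default of true is documented; accepting any letter case is an
    assumption made for this sketch.
    """
    value = env.get("MIGRATION_KEEP_SOURCE", "true")
    return value.strip().lower() != "false"


def apply_source_policy(old_index: dict, migrated_ids: list, keep_source: bool) -> dict:
    """Return the state of the old index after the given documents were migrated."""
    result = copy.deepcopy(old_index)  # leave the caller's data untouched
    for doc_id in migrated_ids:
        if keep_source:
            # Option 1: keep the original document and mark it as migrated.
            result[doc_id]["migrated"] = True
        else:
            # Option 2: remove the original document from the old index.
            del result[doc_id]
    return result


old = {"doc-1": {"type": "x509"}, "doc-2": {"type": "key"}}
kept = apply_source_policy(old, ["doc-1", "doc-2"], keep_source_from_env({}))
deleted = apply_source_policy(
    old, ["doc-1", "doc-2"], keep_source_from_env({"MIGRATION_KEEP_SOURCE": "false"})
)
```

With Option 1 the old index still answers existing queries; with Option 2 it ends up empty once every document has been migrated.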

Choose this option if:

You do not require backwards compatibility for the Search API: either your code has already been updated to query the v3.5 index format, or you were not using the Search API against the old index.

You still have pre-3.5 sensors in use that are not yet ready to be upgraded: the migration tool will continue to migrate their data from the old index to v3.5 on schedule.

To enable, set the following in config_envs/scheduler:

MIGRATION_KEEP_SOURCE=false

Running the Migration Tool

The tool can be run in two ways:

Manual Bootstrap – Run once after upgrading to AgileSec 3.5 to migrate all existing data in the old indexes. This is the recommended first step after an upgrade.

Automatic Data Migration – The tool runs on a schedule, every 60 minutes by default, and picks up any new data that has arrived in the old indexes since the last run. This enables live migration: pre-3.5 sensors that have not yet been upgraded can continue sending data to the old index, and it will be converted to v3.5 automatically. To enable, set MIGRATE_ENABLED=true and v2_sensors=enabled in config_envs/scheduler.
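
The interplay between the bootstrap run and later scheduled runs can be sketched as follows. This is a simplified model, not the tool's actual logic: it assumes Option 1 (documents stay in the old index and are marked migrated: true) and assumes that a run selects documents by the absence of that marker. The function names and the schema_version field are hypothetical placeholders.

```python
def transform_to_v35(doc: dict) -> dict:
    """Placeholder for the real schema transformation, which this guide does not describe."""
    return {**doc, "schema_version": "3.5"}  # hypothetical field


def run_once(old_index: dict, new_index: dict) -> int:
    """One run: migrate every old document not yet marked as migrated.

    Returns the number of documents migrated in this run.
    """
    pending = [doc_id for doc_id, doc in old_index.items() if not doc.get("migrated")]
    for doc_id in pending:
        new_index[doc_id] = transform_to_v35(old_index[doc_id])
        old_index[doc_id]["migrated"] = True  # marker described under Option 1
    return len(pending)


old_index = {"a": {"type": "x509"}, "b": {"type": "key"}}
new_index = {}

first = run_once(old_index, new_index)   # manual bootstrap: all existing data
old_index["c"] = {"type": "token"}       # a pre-3.5 sensor sends new data
second = run_once(old_index, new_index)  # next scheduled run: only the new document
third = run_once(old_index, new_index)   # nothing new has arrived
```

In this model, repeated runs are safe because already-migrated documents are skipped.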

Usage

The migration tool is part of the isg_tools binary, located in the bin directory.

./bin/isg_tools migrate [flags]

Flags:

| Flag | Description |
|---|---|
| | Path to the application configuration file. Defaults to |
| | Disables continuous execution. Run once and exit. Use this for a manual bootstrap. |
| | Prints the application version. |
| | Prints help. |

Running as a manual bootstrap:

./isg_tools migrate

Configuration

The migration tool is configured via config_env/migrate.json. The relevant section is migrate:

{

"opensearch": {

"root_ca_path": "{{ROOT_CA_FILEPATH}}",

"cert_path": "{{OPENSEARCH_ADMIN_USER_CERT_FILEPATH}}",

"key_path": "{{OPENSEARCH_ADMIN_USER_KEY_FILEPATH}}",

"insecure": false,

"address": "{{OPENSEARCH_INTERNAL_URL}}"

},

"scheduleInfo": {

"orgDb": "{{ORG_DOMAIN_UNDERSCORE}}"

},

"isg_tools": {

"migrate": true

},

"migrate": {

"batch_size": 10000,

"max_loops": 0,

"keep_source": true,

"drop_migrated_fields": true,

"crypto_types": ["x509", "key", "protocol", "algorithm", "token", "keystore", "library", "db", "sourcecode"],

"scroll_timeout_minutes": 5,

"op_workers": 4,

"transform_workers": 8,

//"sensor_types": ["Host Filesystem", "GIT Repository"]

},

"sources": {

"x509": [

"{{ORG_DOMAIN_UNDERSCORE}}-isg-event-certificate-2023",

"{{ORG_DOMAIN_UNDERSCORE}}-isg-event-certificate-v2",

"isg-event-certificate-2023",

"isg-event-certificate-2024",

"alias-isg-event-certificate"

],

"key": [

"{{ORG_DOMAIN_UNDERSCORE}}-isg-event-key-2023",

"{{ORG_DOMAIN_UNDERSCORE}}-isg-event-key-v2",

"isg-event-key-2023",

"isg-event-key-2024",

"alias-isg-event-key"

],

"protocol": [

"{{ORG_DOMAIN_UNDERSCORE}}-isg-event-protocol-2023",

"{{ORG_DOMAIN_UNDERSCORE}}-isg-event-protocol-v2",

"isg-event-protocol-2023",

"isg-event-protocol-2024",

"alias-isg-event-protocol"

],

"algorithm": [

"{{ORG_DOMAIN_UNDERSCORE}}-isg-event-algorithm-2023",

"{{ORG_DOMAIN_UNDERSCORE}}-isg-event-algorithm-v2",

"isg-event-algorithm-2023",

"isg-event-algorithm-2024",

"alias-isg-event-algorithm"

],

"sourcecode": [

"{{ORG_DOMAIN_UNDERSCORE}}-isg-event-sourcecode-2023",

"{{ORG_DOMAIN_UNDERSCORE}}-isg-event-sourcecode-v2",

"isg-event-sourcecode-2023",

"isg-event-sourcecode-2024",

"alias-isg-event-sourcecode"

],

"token": [

"{{ORG_DOMAIN_UNDERSCORE}}-isg-event-token-2023",

"{{ORG_DOMAIN_UNDERSCORE}}-isg-event-token-v2",

"isg-event-token-2023",

"isg-event-token-2024",

"alias-isg-event-token"

],

"keystore": [

"{{ORG_DOMAIN_UNDERSCORE}}-isg-event-keystore-2023",

"{{ORG_DOMAIN_UNDERSCORE}}-isg-event-keystore-v2",

"isg-event-keystore-2023",

"isg-event-keystore-2024",

"alias-isg-event-keystore"

],

"library": [

"{{ORG_DOMAIN_UNDERSCORE}}-isg-event-library-2023",

"{{ORG_DOMAIN_UNDERSCORE}}-isg-event-library-v2",

"isg-event-library-2023",

"isg-event-library-2024",

"alias-isg-event-library"

],

"db": [

"{{ORG_DOMAIN_UNDERSCORE}}-isg-event-db-2023"

]

}

},

"environment": "production",

"opensearch_debug": false,

"http_debug": false,

"debug": false

}

Configuration reference:

| Field | Description |
|---|---|
| | Path to the root CA certificate for mTLS. |
| | Path to the admin user certificate for mTLS. |
| | Path to the admin user private key for mTLS. |
| | Set to |
| | OpenSearch internal URL. |
| | Organization domain with dots replaced by underscores. e.g. |
| | Set to |
| | Number of documents to read from OpenSearch per batch. Maximum |
| | Maximum number of batch loops per run. Set to |
| | |
| | |
| | When |
| | Target schema version. Should be |
| | List of crypto object types to migrate. Possible values: |
| | OpenSearch scroll context timeout in minutes. |
| | Number of parallel write workers to OpenSearch. Controls OpenSearch load. |
| | Number of parallel local transform workers. Controls local CPU load. |
| | Array of sensor types to migrate. If not specified, all sensor types will be migrated. |
| | How often the tool checks for new data to migrate, in minutes. Used when running continuously. |
| | Array of old index name mappings. Update this only if your index names are not listed. |
| | |
| | |
| | When |
| | When |
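
Two details of the configuration above lend themselves to a quick sanity check: how {{ORG_DOMAIN_UNDERSCORE}} expands into index names, and whether every entry in crypto_types has at least one source index listed. The sketch below is a hypothetical helper, not part of isg_tools; the function names and the example domain example.com are assumptions. The sources mapping is passed separately from the migrate section so that the check does not depend on where it is nested in the file.

```python
PLACEHOLDER = "{{ORG_DOMAIN_UNDERSCORE}}"


def org_db(org_domain: str) -> str:
    """Organization domain with dots replaced by underscores."""
    return org_domain.replace(".", "_")


def render_index_names(templates: list, org_domain: str) -> list:
    """Expand the organization placeholder in a list of index name templates."""
    return [name.replace(PLACEHOLDER, org_db(org_domain)) for name in templates]


def crypto_types_without_sources(migrate_cfg: dict, sources: dict) -> list:
    """Return the crypto types that have no old index names configured."""
    return [t for t in migrate_cfg.get("crypto_types", []) if not sources.get(t)]


# Example using a subset of the values shown in the configuration above.
migrate_cfg = {"crypto_types": ["x509", "key", "db"]}
sources = {
    "x509": ["{{ORG_DOMAIN_UNDERSCORE}}-isg-event-certificate-2023", "alias-isg-event-certificate"],
    "db": ["{{ORG_DOMAIN_UNDERSCORE}}-isg-event-db-2023"],
}

rendered = render_index_names(sources["x509"], "example.com")
missing = crypto_types_without_sources(migrate_cfg, sources)
```

Here missing lists the crypto types that would need entries added under sources before they could be migrated.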
